Responsible AI: Ensuring Ethical and Accountable Technology

Have you ever joked with your colleagues about being watched by AI at work? It’s a common fear among employees, but the reality is that AI is becoming more integrated into our workplaces and daily lives. As someone who has experienced this fear firsthand, I understand the importance of Responsible AI in ensuring ethical and accountable technology. In this article, we will explore the ethical implications of AI, the role of government and regulation, and best practices for businesses to implement Responsible AI.

Introduction

Artificial intelligence (AI) has rapidly become ubiquitous in our lives, from virtual assistants to predictive analytics for businesses. However, as AI becomes more advanced and integrated into our society, there are growing concerns about the ethical implications of its development and use. It is essential to ensure that AI is developed and deployed responsibly, with ethical considerations at the forefront of decision-making. In this article, we will explore what Responsible AI means and why it is crucial for the future of technology.

Importance and Definition

Responsible AI refers to the development, deployment, and use of AI in an ethical and accountable manner. It aims for AI systems that are transparent, explainable, and as free from unfair bias as possible, and that protect individual privacy and data. Responsible AI also requires accountability: the individuals and organizations that develop and deploy an AI system must take responsibility for its decisions and outcomes.

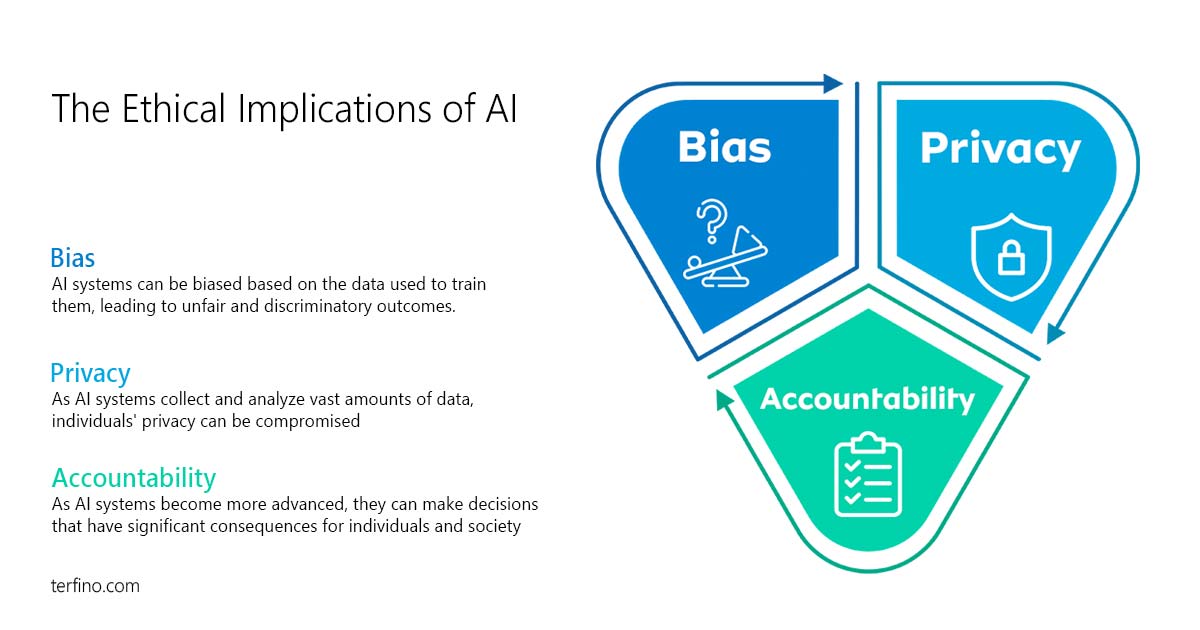

The Ethical Implications of AI

Bias is a central ethical concern in AI. AI systems can inherit bias from the data used to train them, leading to unfair and discriminatory outcomes. For example, some facial recognition systems have been found to be less accurate when identifying individuals with darker skin tones, which can lead to discrimination in law enforcement and other areas.
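One simple way to make this kind of bias visible is to compare a model's accuracy across groups. The sketch below is a minimal illustration, not a complete fairness audit: the group names, the sample records, and the helper functions `accuracy_by_group` and `accuracy_gap` are all hypothetical and invented for this example.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return {group: accuracy} for (group, predicted, actual) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(records):
    """Largest difference in accuracy between any two groups."""
    accuracies = accuracy_by_group(records)
    return max(accuracies.values()) - min(accuracies.values())


# Hypothetical evaluation data: (group, predicted label, true label).
sample = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

print(accuracy_by_group(sample))
print(accuracy_gap(sample))
```

A large gap does not by itself prove discrimination, but it is a signal that the training data or the model deserves a closer look before deployment.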

Privacy is another critical ethical concern in AI. As AI systems collect and analyze vast amounts of data, individuals’ privacy can be compromised. It is essential to ensure that AI systems protect individual privacy and data while allowing for innovation and progress.

Accountability is crucial in AI decision-making. As AI systems become more advanced, they can make decisions that have significant consequences for individuals and society. It is essential to ensure that AI systems are accountable for their choices and actions and that individuals and organizations responsible for their development and deployment take responsibility for their outcomes.
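In practice, accountability often starts with keeping a record of each automated decision and of who answers for it. The following is a rough sketch under assumed requirements: the `DecisionRecord` fields and the `log_decision` helper are hypothetical, and a real system would write to durable, access-controlled storage rather than an in-memory list.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    model_version: str
    outcome: str
    responsible_owner: str  # named person or team answerable for the outcome
    timestamp: str          # UTC time the decision was recorded


def log_decision(log, decision_id, model_version, outcome, owner):
    """Append one decision to the log as a JSON line and return the record."""
    record = DecisionRecord(
        decision_id=decision_id,
        model_version=model_version,
        outcome=outcome,
        responsible_owner=owner,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record


# Hypothetical usage: every value here is made up for illustration.
audit_log = []
log_decision(audit_log, "d-0001", "credit-model-v3", "declined", "lending-risk-team")
print(audit_log[0])
```

Recording the model version and a named owner alongside each outcome means that a contested decision can be traced back to a specific system and to the people responsible for it.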

The Role of Government and Regulation

Governments around the world are beginning to recognize the importance of Responsible AI. Many have adopted laws and initiatives that bear on ethical AI practices, such as the European Union’s General Data Protection Regulation (GDPR), which governs the processing of personal data, and the United States National AI Initiative. Regulation is necessary to ensure that AI is developed and deployed responsibly and that ethical considerations are at the forefront of decision-making.

Examples of regulatory frameworks relevant to AI include the GDPR in the European Union, which aims to protect individuals’ privacy and data, and the proposed Algorithmic Accountability Act in the United States, a bill that seeks to make automated decision systems more transparent and their operators more accountable.

The table below lists government initiatives and regulatory frameworks related to Responsible AI in different countries:

| Country | Government Initiative / Regulatory Framework |
|---|---|
| European Union | General Data Protection Regulation (GDPR) |
| United States | National AI Initiative, Algorithmic Accountability Act (proposed) |
| Canada | Directive on Automated Decision-Making |
| Singapore | Model AI Governance Framework |
| United Kingdom | Centre for Data Ethics and Innovation |

Implementing Responsible AI in Business

Businesses must also prioritize Responsible AI. Best practices for implementing ethical AI in business include:

- Ensuring that AI systems are transparent, explainable, and unbiased: This means that AI systems should be designed to make their decision-making process clear and understandable to the people who use them. Transparency and explainability help to build trust in AI systems by allowing individuals to understand how decisions are made and to challenge those decisions if necessary. Unbiased AI systems are free from discrimination or prejudice, ensuring they treat all individuals fairly.

- Protecting individual privacy and data: As AI systems collect and analyze vast amounts of data, protecting personal privacy and data is essential. This includes measures such as data encryption, access controls, and data minimization, which means limiting the data AI systems collect and store to what they actually need. Protecting individual privacy and data is crucial to maintaining trust in AI systems and ensuring that individuals feel comfortable using them.

- Ensuring accountability for AI decisions and actions: Advanced AI systems can make decisions with significant consequences for individuals and society, so the individuals and organizations that develop and deploy them must take responsibility for the outcomes. Clear accountability helps to ensure that AI is developed and deployed responsibly, with ethical considerations at the forefront of decision-making.
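To make the privacy practice above more concrete, here is a minimal sketch of data minimization combined with pseudonymization. It is an illustration under stated assumptions, not a compliance recipe: the field names, the `ALLOWED_FIELDS` allow-list, the demo key, and the helper functions are all hypothetical.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields the model is assumed to need.
ALLOWED_FIELDS = {"age_band", "region", "account_tenure_months"}


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a keyed hash (HMAC-SHA256)."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    """Keep only allow-listed fields and swap the user ID for a pseudonym."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    reduced["subject"] = pseudonymize(record["user_id"], secret_key)
    return reduced


# Hypothetical raw record, invented for this example.
raw = {
    "user_id": "u-1001",
    "full_name": "Example Person",
    "email": "person@example.com",
    "age_band": "30-39",
    "region": "EU",
    "account_tenure_months": 18,
}
key = b"demo-key-do-not-use-in-production"

print(minimize(raw, key))
```

The name and email address never reach the AI system, and the keyed hash lets records for the same person be linked without exposing the original identifier. The key itself would need to be stored and access-controlled separately.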

Many companies have adopted ethical AI practices. For example, IBM has developed a set of principles for ethical AI, and Google has published its own AI Principles and reviews its AI projects against them. The potential benefits of Responsible AI for businesses include increased trust and credibility among customers, reduced legal and reputational risks, and improved decision-making.

The Impact of AI on Employment and Society

AI has the potential to impact employment and society significantly. Automation may lead to job losses in certain industries, while the increased use of AI in decision-making may lead to discrimination and bias. It is essential to address these potential negative consequences and ensure that AI is developed and deployed responsibly.

Ethical considerations must be at the forefront of the development and deployment of AI systems. This includes ensuring that AI systems are transparent, explainable, and unbiased, protecting individual privacy and data, and ensuring that AI systems are accountable for their decisions and actions.

Transparency and Explainability in AI

Transparency and explainability are critical in AI decision-making. It is essential to ensure that individuals understand how AI systems make decisions and that they can challenge and appeal these decisions. Best practices for ensuring transparency and explainability in AI systems include providing individuals with access to information about how AI systems work and how decisions are made.
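As a small illustration of what an explanation can look like, the sketch below scores an application with a simple linear model and reports how much each input pushed the score up or down. The weights, feature names, and threshold are hypothetical values chosen for the example; real systems are usually more complex and may need dedicated explanation techniques.

```python
# Hypothetical weights for a simple linear scoring model.
WEIGHTS = {"income": 0.5, "existing_debt": -0.8, "years_employed": 0.3}
BIAS = 0.1
THRESHOLD = 0.0  # scores at or above this value are approved


def explain(features: dict) -> dict:
    """Score one applicant and rank the features by their influence."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    # Sort by absolute contribution so the most influential factor comes first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return {"score": score, "approved": score >= THRESHOLD, "reasons": ranked}


# Hypothetical, already-normalized inputs for one applicant.
applicant = {"income": 1.0, "existing_debt": 2.0, "years_employed": 1.0}
result = explain(applicant)

print("approved:", result["approved"])
for name, contribution in result["reasons"]:
    print(f"{name}: {contribution:+.2f}")
```

Showing the ranked contributions alongside the decision gives the affected person something specific to question or appeal, which is the point of explainability.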

The benefits of transparent and explainable AI for businesses and society include increased trust and credibility, improved decision-making, and reduced legal and reputational risks.

The Future of Responsible AI

Emerging trends and challenges in Responsible AI include:

- Developing AI systems that can learn from limited data.

- Using AI in decision-making in healthcare and criminal justice systems.

- Increasing the use of AI in the workplace.

The need for continued research and development of ethical AI practices is crucial. It is essential to ensure that AI is developed and deployed responsibly, with ethical considerations at the forefront of decision-making.

The potential for AI to positively impact society when used responsibly is significant. Responsible AI has the potential to improve healthcare outcomes, reduce discrimination and bias, and increase efficiency and productivity in the workplace.

Conclusion

Responsible AI is crucial for the future of technology. It is essential to ensure that AI is developed and deployed ethically and that its outcomes are accountable to individuals and society. Governments, businesses, and individuals must prioritize ethical considerations in developing and deploying AI systems. By doing so, we can ensure that AI benefits individuals and society while mitigating potential negative consequences.

As someone who has experienced the fear of being watched by AI at work, I know firsthand the importance of Responsible AI. Let us work together to ensure that Responsible AI becomes the norm so that we can embrace AI’s benefits while protecting individual privacy and data.

Other Resources

Book: Responsible Artificial Intelligence: How to Develop and Use AI in a Responsible Way

In this book, the author examines the ethical implications of Artificial Intelligence systems as they integrate and replace traditional social structures in new sociocognitive-technological environments. She discusses issues related to the integrity of researchers, technologists, and manufacturers as they design, construct, use, and manage artificially intelligent systems; formalisms for reasoning about moral decisions as part of the behavior of artificial autonomous systems such as agents and robots; and design methodologies for social agents based on societal, moral, and legal values.